Namaste! Recently I started a new project. Together with Deutsche Welthungerhilfe e.V. I am investigating how we can reduce world hunger with Deep Learning. Yes, I expect Deep Learning to have a huge impact on global humanitarian work. In the near future, that is.

The idea is very straightforward.

The Child Growth Monitor is a mobile application. What does it do? Let me quote from its GitHub repo:

“Hunger or malnutrition is not simply the lack of food, it is usually a more complex health issue. Parents often don’t know that their children are malnourished and take measures too late. Current standardized measurements done by aid organisations and governmental health workers are time consuming and expensive. Children are moving, accurate measurement, especially of height, is often not possible.”

Bottom line: There are standard processes to measure malnutrition. But if there are too many children, those processes are too time consuming. This is usually the case in underdeveloped countries. Thus it would make sense to automate this as much as possible. Preferably with a mobile app. And beyond that, especially with Deep Learning!

We are very optimistic that Deep Learning would be a game changer here. Now our main question is: What are our requirements?

How much data do we need?

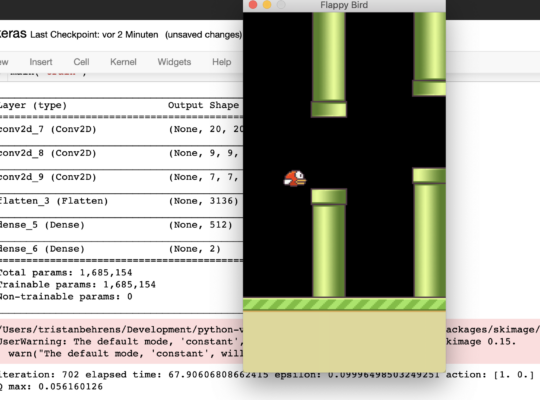

You see, the idea is very simple. Just let a child stand in front of a camera and measure her or him automatically with a Deep Neural Network. For training you need data. How much? We do not know yet. Remember that training large image-processing Neural Nets requires a lot of images. I am talking about tens of thousands up to millions.

Currently a database is being filled with data. People are supporting us by using the mobile app to generate the data, which is then stored in a secure and compliant place. But again… How much data do we really need? We do not know yet. How to find out? Let us do some experiments!

How to preprocess the data?

This is a crucial point! In essence, our data consists of inputs and outputs. The outputs are simple. They include but are not limited to the height and weight of the children. The inputs are a little bit more complex. But only a little bit. We have access to images and point clouds. That is already quite something!

But wait… I guess you are familiar with images. But what are point clouds? Have a look at this image by John Cummings. It shows Monmouth Castle in Wales:

A point cloud is a huge set of points. Each point has three coordinates in space (x, y, z) plus, optionally, some other features like color and confidence. Point clouds represent objects in 3D space, which adds a lot of information to our scenario.
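To make this a bit more tangible, here is a minimal sketch of how such a point cloud could be held in memory. The column layout (x, y, z, confidence), the number of points and the random values are assumptions for illustration only. This is not the actual format the app produces.

```python
import numpy as np

# Hypothetical layout: one row per point, columns are x, y, z, confidence.
rng = np.random.default_rng(seed=0)
num_points = 1000

xyz = rng.uniform(-1.0, 1.0, size=(num_points, 3))        # coordinates in space
confidence = rng.uniform(0.0, 1.0, size=(num_points, 1))  # one extra feature
point_cloud = np.hstack([xyz, confidence])

print(point_cloud.shape)  # (1000, 4)

# Example of using the extra feature: keep only the more confident points.
confident_points = point_cloud[point_cloud[:, 3] > 0.5]
```

So in the end a point cloud is just a table of floats, with as many rows as the sensor happened to deliver.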

Now comes the crucial part. We want to feed our data into our Neural Net. How to preprocess that data? Well, outputs are easy. We do a simple regression here, so there is no need for something fancy. Which options do we have for the inputs? Here is a non-exhaustive list:

- Individual images of the children: Here we would just go down the standard road of image processing with Deep Learning. This is the easiest of all. We could come up with our own Neural Nets. Or we could do some beautiful transfer learning.

- Plain point clouds: Point clouds are just streams of floats. Some coordinates plus some additional data. Almost like sound waves, but structured in a different way. We could feed point clouds into a Neural Net right away. Of course, after ensuring a fixed length. Different point clouds have a different number of points. Removing some points and padding might be necessary.

- Voxel grids: Voxels are used both in computer games and in MRIs. Voxel grids are a means of discretizing point clouds. Point clouds have different sizes. But a voxel grid ensures a fixed size. And on top of that, voxel grids maintain metric space.

- Any of the above with sequences, for example videos.
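Two of the options above, fixed-length point clouds and voxel grids, can be sketched in a few lines of NumPy. This is only a rough illustration under assumptions: the function names `to_fixed_length` and `to_voxel_grid`, the target size of 2048 points, the 32×32×32 grid and the bounding box are all made up here, and the point cloud is random data. We have not settled on any of these choices yet.

```python
import numpy as np


def to_fixed_length(points, target_size, rng):
    """Subsample or zero-pad an (n, d) point cloud to exactly target_size rows."""
    n = points.shape[0]
    if n >= target_size:
        # Too many points: keep a random subset without replacement.
        keep = rng.choice(n, size=target_size, replace=False)
        return points[keep]
    # Too few points: append rows of zeros.
    padding = np.zeros((target_size - n, points.shape[1]), dtype=points.dtype)
    return np.vstack([points, padding])


def to_voxel_grid(xyz, grid_shape, bounds_min, bounds_max):
    """Count how many points fall into each cell of a fixed-size 3D grid."""
    grid_shape = np.asarray(grid_shape)
    bounds_min = np.asarray(bounds_min, dtype=float)
    bounds_max = np.asarray(bounds_max, dtype=float)
    # Drop points that lie outside the bounding box.
    inside = np.all((xyz >= bounds_min) & (xyz < bounds_max), axis=1)
    xyz = xyz[inside]
    # Map metric coordinates to integer cell indices.
    cell_size = (bounds_max - bounds_min) / grid_shape
    indices = np.floor((xyz - bounds_min) / cell_size).astype(int)
    indices = np.minimum(indices, grid_shape - 1)  # guard against float rounding
    grid = np.zeros(tuple(grid_shape), dtype=np.float32)
    # np.add.at accumulates correctly when several points share a cell.
    np.add.at(grid, (indices[:, 0], indices[:, 1], indices[:, 2]), 1.0)
    return grid


rng = np.random.default_rng(seed=0)
cloud = rng.uniform(-1.0, 1.0, size=(5000, 3))  # random stand-in for a real scan

fixed = to_fixed_length(cloud, target_size=2048, rng=rng)
padded = to_fixed_length(cloud[:100], target_size=2048, rng=rng)
voxels = to_voxel_grid(cloud, grid_shape=(32, 32, 32),
                       bounds_min=(-1, -1, -1), bounds_max=(1, 1, 1))

print(fixed.shape, padded.shape, voxels.shape)  # (2048, 3) (2048, 3) (32, 32, 32)
```

Note the difference: the fixed-length version keeps the raw coordinates but throws points away or adds artificial ones. The voxel grid keeps every point inside the box, but only at the resolution of one cell. Since each cell has a fixed edge length, distances in the grid still correspond to distances in the real world.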

Which one is best? We do not know yet! But we are going to find out!

Let us kaggle.

Remember, Kaggle is your home for data science. The platform is a host for a lot of datasets. And its main purpose is to organize challenges. Yes, you can make a living by just winning Kaggle competitions!

We expect that using Kaggle would contribute to the overall success of the project. We intend to create a dataset and specify a challenge in order to learn more about the data and how Machine Learning can be used successfully on that data.

Kudos to ZDI Mainfranken Würzburg!

It is no secret that I do most of my work in Würzburg, Bavaria. Recently the Zentrum für Digitale Innovationen (ZDI) Mainfranken opened. This was a really great event for the region. It is obvious that the city is very motivated to take a huge part in digitalization.

We had our kick-off in the cube, the lab for founders. We made ourselves comfortable and started working right away. The building is excellent for brainstorming ideas and implementing prototypes. We had our first Neural Net up and running in no time.

The end is just the beginning.

Well! There is still a long way to go with the whole project. But I am very confident that Deep Learning will contribute a lot.

Stay in touch.

I hope you liked the article. Why not stay in touch? You will find me on LinkedIn, XING and Facebook. Please add me if you like and feel free to like, comment and share my humble contributions to the world of AI. Thank you!

If you want to become a part of my mission of spreading Artificial Intelligence globally, feel free to become one of my Patrons.

A quick word about me. I am a computer scientist with a love for art, music and yoga. I am an Artificial Intelligence expert with a focus on Deep Learning. As a freelancer I offer training, mentoring and prototyping. If you are interested in working with me, let me know. My email address is tristan@ai-guru.de - I am looking forward to talking to you!